C&C 2019

Hybrid Microgenetic Analysis

Hybrid Hand

In Human-Computer Interaction, skills have developed around the keyboard, pen, and mouse, yet the hand and body can communicate far more richness and nuance than these devices capture. We explore research areas such as:

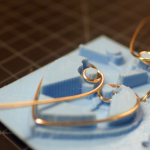

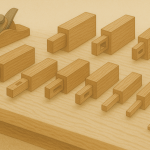

- Smart Tools – Every discipline has developed tools that capture rich body-based input. How might we incorporate new form factors like brushes, pipettes, piping bags, carving tools, or wheels and expand the way we work with hybrid materials?

- Embedded Systems – How might we integrate sensor technologies to give us more information about our interactions with materials such as foams, liquids, emulsions, and solids?

- Augmented Reality Interfaces – How might we improve the information bandwidth of tools? Can smart tools communicate via haptic, sonic, or voice cues?

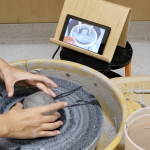

- Skill Acquisition Data Mining – How might smart tools help us acquire tacit skills and share this knowledge with others?

- Computational Ethnography – How might we leverage data from sensors or activity logs to make sense of what is occurring and, in turn, design better tools and interactive systems?

- Affective Computing – What might biosignals (electrodermal activity, heart rate, EEG) tell us about how practitioners regulate emotion or cognitive load to stay in focus or flow?

Associated Publications